The Internet On The Shop Floor: Let's Keep It Safe

Use of the Internet on the shop floor brings many benefits, but they can be offset by concurrent security risks. (2002 Guide To Metalworking On The Internet)

The Internet has arrived on the shop floor. For corroboration, just browse any publications about industrial automation. The realization soon hits—not only has it arrived, but it has arrived in force.

In the 2001 Modern Machine Shop Guide To Metalworking On The Internet, James R. Fall, president and CEO of Manufacturing Data Systems Inc. (MDSI) (Ann Arbor, Michigan), wrote the article "What Does It Take To Internet-Enable Machine Tools?" in which he discussed why and how to enable shop floor data collection using a software-based computer numerical control (CNC) with a database at its core. (This article may be found here). The volume of current interest in this area points indisputably toward a rapid proliferation of Internet connectivity in factory applications that was unthinkable less than a decade ago.

The adoption of Ethernet networks in factory applications fuels and coincides with that rapid proliferation. Room abounds to debate whether Ethernet is appropriate for all factory networks, but surely no one would continue to argue that a network technology with office automation roots is not appropriate for the shop floor. Its adoption has been rapid, driven by installed cost advantages over proprietary networks plus easier integration with business systems.

All major providers of industrial networks and automation equipment now support Ethernet connectivity and some form of Internet access (See sidebar at right). The obvious hot trend now is to go the next step by offering World Wide Web connectivity to shopfloor devices.

Background

In the last 3 decades, the semiconductor industry has had the astonishing record of doubling processor computing bandwidth about every 2 to 3 years. Upon this technological foundation, three forces merged to bring us to the current state:

1. Development of the Internet

2. A very strong push by major end users of industrial automation for the adoption of standards-based, open systems

3. The emergence of the Web, with its attendant multiplatform, browser-based user interface.

Ethernet and transmission control protocol/Internet protocol (TCP/IP) can be traced to the first driving force. The second brought an abortive journey through manufacturing automation protocol (MAP) prior to the merger of industrial and office networks that is now under way. Today, the third driving force is enabling unprecedented freedom of access to information from any computer, anywhere in the world, so long as it has a browser and access to the Internet. A more detailed examination of these forces and the changes they wrought may offer clues to future evolution.

In 1981, the Internet was born—the product of an effort that the Defense Advanced Research Projects Agency (DARPA) funded to develop a communications system linking isolated defense research projects. From that work sprang a scheme for enabling computers at geographically dispersed sites to establish connections over existing telecommunications media and to exchange data packets independently of the computer technology at each node.

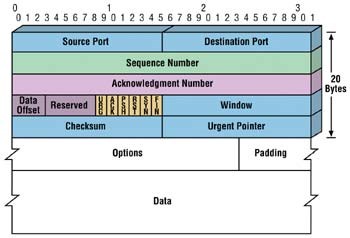

The scheme was the first instantiation of TCP/IP and the domain name system (DNS). TCP describes the mechanisms and format for encapsulating data packets into standardized, content-independent bit streams for presentation to the network. IP describes the mechanisms and format by which a sender discovers how to address and deliver a message to a specific receiver. DNS provides hierarchical listings of IP addresses, which are unique to specific network nodes. An IP address is a string of numbers and periods (nnn.xxx.yy.zz) that a DNS server maps to a user-friendly host name (www.myaddress.com), the part of a uniform resource locator (URL) that identifies the server. Note that these definitions are considerably simplified from the more detailed and specific information available on the Internet. (See www.whatis.com.)
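The name-to-address mapping described above can be sketched in a few lines of Python. This is only an illustration: `NAME_TABLE` and `resolve` are hypothetical stand-ins for a real DNS lookup, and the address comes from a block reserved for documentation examples.

```python
import ipaddress

# Hypothetical name table standing in for a DNS server. The address is taken
# from 192.0.2.0/24, a block reserved for documentation examples.
NAME_TABLE = {"www.myaddress.com": "192.0.2.10"}


def resolve(host_name: str) -> ipaddress.IPv4Address:
    """Look up a host name in the toy table and return its IP address."""
    return ipaddress.IPv4Address(NAME_TABLE[host_name])


address = resolve("www.myaddress.com")
print(address)       # the dotted form that people read
print(int(address))  # the same address as a single 32-bit number
```

The dotted notation is a convenience for people; on the wire, an IPv4 address is just the 32-bit number.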

Through the late 1980s and early 1990s, Ethernet networks were only one of several competing technologies (primary among them being token ring and token bus) in the race to dominate office-environment networks.

Ethernet used carrier sense multiple access/collision detect (CSMA/CD) media access control. CSMA/CD means that when a node wants to send a message, it checks for a carrier already "on the wire"—to see whether another node is transmitting. If so, the node wanting to send a message waits for a time and checks again. When the wire is no longer in use, the node starts its own carrier and begins to transmit. Collision detection comes into play when two nodes try to transmit simultaneously. In that case, both stop transmitting and wait a randomly generated time, then try again.
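The listen, transmit, collide and retry cycle can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model of real hardware: time is divided into equal slots, every node has exactly one frame to send, and backoff timers pause while the wire is busy. The retry delay is drawn with the binary exponential backoff that standard Ethernet uses, a detail the description above summarizes as "a randomly generated time." The function and parameter names are hypothetical.

```python
import random


def simulate_csma_cd(node_count: int, frame_slots: int = 3, seed: int = 1,
                     max_slots: int = 10_000) -> dict[int, int]:
    """Return, for each node, the slot at which its single frame finished."""
    rng = random.Random(seed)
    backoff = {n: 0 for n in range(node_count)}   # idle slots left to wait
    attempts = {n: 0 for n in range(node_count)}  # collisions seen so far
    finished: dict[int, int] = {}
    busy_until = 0  # first slot at which the wire is idle again

    for slot in range(max_slots):
        if len(finished) == node_count:
            break
        if slot < busy_until:
            continue  # carrier sensed: every waiting node defers
        waiting = [n for n in range(node_count) if n not in finished]
        ready = [n for n in waiting if backoff[n] == 0]
        for n in waiting:
            if backoff[n] > 0:
                backoff[n] -= 1  # count down one idle slot
        if len(ready) == 1:
            # Exactly one sender: it holds the wire for the whole frame.
            busy_until = slot + frame_slots
            finished[ready[0]] = busy_until
        elif len(ready) > 1:
            # Collision: each sender stops and waits a random number of
            # slots, drawn from a window that doubles after every collision.
            for n in ready:
                attempts[n] += 1
                backoff[n] = rng.randrange(2 ** min(attempts[n], 10))
    return finished


finish_slots = simulate_csma_cd(node_count=4)
for node, slot in sorted(finish_slots.items(), key=lambda item: item[1]):
    print(f"node {node} finished at slot {slot}")
```

Changing `seed` changes the order and timing in which the nodes get through, which is the point: the delay any one node sees depends on chance.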

The two primary Ethernet competitors used different varieties of token-passing access control. Both token technologies used some form of message priority modification to guarantee access to the network within some maximum time. The competing technologies may have been technically superior to Ethernet but, if so, that made little difference. Ethernet adopted TCP/IP, making remote data connections over the fledgling Internet as easy and transparent as local data connections. That fact, plus Ethernet's low cost for media and interfaces, rapidly prevailed in office environment information technology applications.

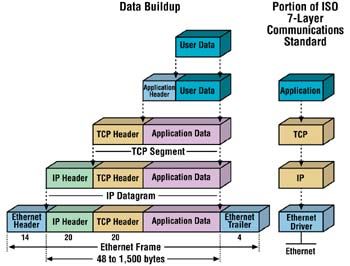

Figure 1 depicts TCP. Figure 2 offers a view of the encapsulation of data from the application to the Ethernet wires.
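The layering idea behind this encapsulation can also be sketched in code. The headers below are deliberately simplified assumptions (real TCP, IP and Ethernet headers carry many more fields, such as sequence numbers, checksums and lengths), and the function names, ports and addresses are hypothetical. The point is only that each layer wraps the output of the layer above it.

```python
import ipaddress
import struct


def tcp_segment(payload: bytes, src_port: int, dst_port: int) -> bytes:
    """Simplified transport header: source and destination ports only."""
    return struct.pack("!HH", src_port, dst_port) + payload


def ip_packet(segment: bytes, src_ip: str, dst_ip: str) -> bytes:
    """Simplified network header: 4-byte source and destination addresses."""
    src = ipaddress.IPv4Address(src_ip).packed
    dst = ipaddress.IPv4Address(dst_ip).packed
    return src + dst + segment


def ethernet_frame(packet: bytes, src_mac: bytes, dst_mac: bytes) -> bytes:
    """Simplified link header: destination MAC, source MAC, EtherType."""
    ether_type = struct.pack("!H", 0x0800)  # 0x0800 marks an IPv4 payload
    return dst_mac + src_mac + ether_type + packet


payload = b"spindle_speed=1200"  # hypothetical application data
segment = tcp_segment(payload, src_port=49152, dst_port=80)
packet = ip_packet(segment, "192.0.2.10", "192.0.2.20")
frame = ethernet_frame(packet, src_mac=bytes(6), dst_mac=b"\xff" * 6)

print(len(payload), len(segment), len(packet), len(frame))
```

Each step adds a fixed number of header bytes in front of the data and leaves the application payload itself untouched.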

Another crucial addition to Internet technology—one that ultimately made the Internet an indispensable tool on virtually every office workstation and home computer—was the browser. Browsers, and the data format standards that enable them, provide a consistent, platform-independent, intuitive and powerful user interface.

Many Internet users are also familiar with some of the higher-layer application protocols that use TCP/IP to access the Internet. These include the Web's hypertext transfer protocol (HTTP); file transfer protocol (FTP); Telnet, which allows users to log on to remote computers; and the simple mail transfer protocol (SMTP). These and other protocols are often packaged with TCP/IP as a "suite."

In retrospect, the factors that made Ethernet a winner over token ring and token bus for office environments are obvious. Using low-cost media and interface electronics, all the computers in a single facility could be connected to transfer files and send messages. Any network node could even communicate with external domains without the need for format translation software. Additional functions quickly emerged for enabling remote connectivity for launching remote applications, and so forth.

A significant new software development sector emerged, focusing on standardized interfaces to ease application integration over TCP/IP-based networks. Over a relatively brief period, developers produced standardized mechanisms for using browser functionality to manipulate:

- Database records

- Secure connections for Internet-based commerce (secure sockets layer, or SSL)

- Dynamic Web pages where a framework is populated from database records

- Languages for platform-independent software programming (Java, ActiveX)

- Standardized object interfaces (CORBA, DCOM)

- And, perhaps most powerful of all, a new format for delivering data—extensible markup language (XML).

Industry groups are developing naming conventions for XML data, or "schemas," for business applications. These schemas will enable interoperability among business applications without the need for data format translations. (For those who want to know more, detailed explanations for the undefined terms and acronyms presented above may be found at www.whatis.com.)

Ethernet On The Shop Floor

The factors that made Ethernet an easy winner for office environments—low cost, high performance, transparent interface to the Internet and increasingly standardized software interfaces—are equally strong drivers in production environments. However, factory networks must meet different technical requirements.

Communications among programmable devices in a factory are peer-to-peer in nature whereas information technology networks are usually client/server based. Furthermore, factory networks linking programmable devices predominantly carry synchronization data and pass parameters or short messages. Such data packets are small and must meet often-stringent timing requirements because the systems they support run in real time. Failure to meet timing deadlines can have disastrous consequences that include risk to human health and safety. The technical term is that real-time systems must be deterministic.

Because of its CSMA/CD media access control, Ethernet is not deterministic: no mathematically rigorous way exists to guarantee message delivery times. (See "Protecting America's Information Infrastructure" on page 39.) Production environments are also very "dirty" from electrical noise and physical viewpoints. These issues bring into question the reliability of office networks used in an environment for which they were not designed.

These very valid concerns delayed factory adoption of Ethernet until about the mid-1990s, but again, technology evolution prevailed. Fiber-optic cable technology is far less susceptible to electrical noise, is readily available, and is relatively easy to use. Driven by technology advances, Ethernet data rates have increased so that 100 megabits per second (Mbps) is now common, and gigabit Ethernet is emerging. Packet transmission time is now one to two orders of magnitude shorter than it was a decade ago.
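The effect of the higher data rates on packet transmission time can be checked with simple arithmetic. The sketch below assumes the largest standard Ethernet frame (1,518 bytes, not counting the preamble) and compares the original 10-Mbps Ethernet rate with the two faster rates. The function name is hypothetical.

```python
MAX_FRAME_BYTES = 1518  # largest standard Ethernet frame, without preamble


def transmission_time_us(frame_bytes: int, bits_per_second: float) -> float:
    """Time to put one frame on the wire, in microseconds."""
    return frame_bytes * 8 / bits_per_second * 1e6


for label, rate in [("10 Mbps", 10e6), ("100 Mbps", 100e6), ("1 Gbps", 1e9)]:
    time_us = transmission_time_us(MAX_FRAME_BYTES, rate)
    print(f"{label}: {time_us:.1f} microseconds")
```

A full-size frame that occupied the wire for roughly 1.2 milliseconds at 10 Mbps takes about a tenth of that at 100 Mbps and about a hundredth at 1 Gbps.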

Processor technology advancements have also dramatically boosted the processing bandwidth of network interface electronics and computer central processing units (CPUs), thereby reducing turnaround time, and with it, message latency. Combine all these advances, and the result is that many of the former barriers to factory use of Ethernet no longer exist, even though this technology is still not truly deterministic. (When turnaround and transmission times are brief enough, and total network traffic is low enough that the probability of meeting timing deadlines is high, then Ethernet use is likely to be acceptable, unless the deadlines in question involve safety.)
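The parenthetical point, that a lightly loaded network meets its deadlines with high probability but never with certainty, can be illustrated with a rough Monte Carlo estimate. Everything in this sketch is an assumption made for illustration: each transmission attempt is taken to collide independently with a fixed probability `collision_prob`, the frame time is that of a full-size frame at 100 Mbps, and the backoff slot is 5.12 microseconds (512 bit times at 100 Mbps). It is not a validated traffic model.

```python
import random


def deadline_hit_rate(collision_prob: float, deadline_us: float,
                      frame_us: float = 121.4, slot_us: float = 5.12,
                      trials: int = 100_000, seed: int = 7) -> float:
    """Estimate the fraction of frames delivered within the deadline."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        elapsed = 0.0
        attempt = 0
        # Assumed model: every attempt collides with the same probability.
        # Real Ethernet gives up after 16 attempts; here the frame is sent.
        while attempt < 16 and rng.random() < collision_prob:
            attempt += 1
            # Random wait from a window that doubles after each collision.
            elapsed += rng.randrange(2 ** min(attempt, 10)) * slot_us
        elapsed += frame_us  # the transmission that finally succeeds
        if elapsed <= deadline_us:
            hits += 1
    return hits / trials


light = deadline_hit_rate(collision_prob=0.05, deadline_us=200.0)
heavy = deadline_hit_rate(collision_prob=0.50, deadline_us=200.0)
print(f"light traffic: {light:.4f}   heavy traffic: {heavy:.4f}")
```

Under these assumptions, the light-traffic estimate comes out very close to 1 but below it, which is why a high probability may be acceptable for routine data yet not for deadlines that involve safety.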

The first instantiations of shopfloor, Ethernet-connected, human-interface devices were really Intel-based personal computers (PCs) packaged for industrial applications. These quickly led to the development and implementation of factory intranets. For factory intranets, shopfloor human-interface services are usually browser-based and use Microsoft Windows functionality. PC-based control systems and embedded controllers with PC front ends (for example, CNC and robot controllers) emerged in parallel with those early implementations. There, Ethernet connectivity had such obvious advantages there was no room for a competing technology.

Innovative PC-based control and some programmable logic controller (PLC) suppliers have adapted Ethernet for use in connecting remote input/output (I/O) systems. Ethernet is now in use (in redundant configurations) for even "mission critical" applications such as distributed control systems (DCS) and supervisory control and data acquisition (SCADA) systems found in chemical plants and petroleum refineries.

The results are in, and the winner is clear. Except for the most critically demanding applications, the present and future factory network is Ethernet.

From Ethernet To Internet

The presence of Ethernet and TCP/IP in factory applications enables access to the Internet (or intranet) functionality on which we have become so dependent, both for our livelihoods and in our homes. By using TCP/IP and DNS services, two nodes anywhere in the world can establish a connection and exchange data. That is, they can unless they are prohibited from doing so by routing rules programmed into one or more of the many routing devices in the routing chain. (DNS resolves the receiver's name to an IP address, and the routers along the path then forward each data packet, hop by hop, from sender to receiver.)

That level of connectivity has provided immense benefits in the world of business systems, enabling location-independent access to information and integration of disparate systems, with and without human intervention. Similar benefits may be realized from factory implementations as well. However, certain issues must be addressed with caution.

In a production environment, standardized interfaces are different, although they provide about the same function. The programmable devices that provide factory automation and are linked by Ethernet invariably use proprietary data formats for their entire data structure.

Major suppliers of programmable devices are few, and they compete fiercely. All provide software specifications, and sometimes software packages, that encapsulate their proprietary formats as a layer above TCP/IP. Thus, a workstation connected to the factory network and using its operator interface or programming software can access all data on the entire control system.

In contrast, below the programmable device level, in the world of sensors and actuators, suppliers abound. Of necessity, the automation industry has defined standards for networks and device data structures. These, too, are usually encapsulated for use over the Ethernet.

The end result is that today, factory networks implemented using Ethernet and with a connection to the Internet offer transparent access to all the data in all the connected devices—unless, of course, the user controls that access in some way. Likewise, programming access to all programmable devices is also transparent unless the user restricts it in some way.
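One simple form such a restriction can take is an allowlist of network addresses that are permitted programming access. The sketch below is hypothetical throughout: the subnet, the function name and the idea of checking in application code are assumptions for illustration, and in practice this kind of rule is usually enforced in routers or firewalls.

```python
import ipaddress

# Hypothetical allowlist: only the engineering subnet may program controllers.
ALLOWED_NETWORKS = [ipaddress.ip_network("10.20.0.0/16")]


def may_program_controller(client_ip: str) -> bool:
    """Return True if the client address falls inside an allowed network."""
    address = ipaddress.ip_address(client_ip)
    return any(address in network for network in ALLOWED_NETWORKS)


print(may_program_controller("10.20.5.7"))    # inside the allowed subnet
print(may_program_controller("203.0.113.9"))  # outside it
```

An address check of this kind only narrows where a connection may come from; it does nothing to confirm who is at the keyboard.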

Transparent access to information from the shop floor to the boardroom, and from the customer to the end-of-product-life recycle center, enables many valuable functions. Among them are the following:

- Design engineering access to customer requirements

- Manufacturing engineering access to factory resource information

- Customer access to build, assemble and ship information (à la Dell Computer)

- Procurement visibility into bill of materials (BOM) requirements and component inventories

- Supervisory access to factory health and daily schedule progress

- Management access to work in progress (WIP), inventory and productivity information

- Operator access to equipment training manuals and instructions

- Maintenance access to equipment maintenance manuals and maintenance, repair, and operations (MRO) status

- Equipment vendor access to equipment health information and the ability to perform remote diagnostics

- Product design information available to end-of-product-life disposal decisions.

This list is by no means an exhaustive one. If one statement can be made with absolute certainty, it is that the clever imagination of application developers will outstrip even the most optimistic of forecasts.

The Down Side

To all the advantages and benefits that this new technology offers, however, there is a down side: The very openness on which the Internet relies also presents opportunities for misadventure, both deliberate and accidental.

Links to several examples of deliberate attacks are given on the Gas Technology Institute site at gtiservices.org/security/attacks.shtml. Among the examples are the following. In a well-documented attack in Australia, a disgruntled control system integrator penetrated the control system for a wastewater facility and released several million liters of raw sewage into waterways. A hacker (who, as it turned out, actually lived in Israel) penetrated the computer system for MIT's Plasma Science and Fusion Center, and was charged with attacking NASA, the Pentagon, and Harvard, Yale, Cornell and Stanford university systems as well.

Most incidents, likely more than 70 percent, are caused or aided by insiders (see "Can't happen at your site?" by Eric Byres, InTech, at www.isa.org/journals/intech/feature/1,1162,710,00.html). And most of those incidents are accidental—a manufacturing engineer intending to make a change in the program for PLC "xyz" instead logs into PLC "xzy" with disastrous consequences; an operator accidentally deletes a crucial file; and so forth. The primary point here is that factory Ethernet systems are subject to the same vulnerabilities, and perhaps a few more, as those in office environments. The consequences of an unauthorized penetration of a factory network can certainly be more serious in terms of human health and safety than penetration of an office network.

The Computer Emergency Response Team (CERT) of Carnegie Mellon University's Software Engineering Institute performs cyber forensics on reported incidents of intrusions and denial-of-service attacks. CERT statistics (www.cert.org/stats/cert_stats.html) show that from 2000 to 2001, reported incidents more than doubled (from 21,756 to 52,658), as did system vulnerabilities (from 1,090 to 2,437).

Users must treat the information security aspects of new factory Ethernet implementations with care. For existing installations, a vulnerability assessment is advisable. Independent, sometimes self-directed assessment methodologies are emerging from national laboratories and other government-funded entities. For example, CERT has developed a self-directed assessment methodology (www.cert.org/octave/) that begins with the identification of critical information assets and concludes with a risk mitigation strategy and an action list.

What Does The Future Hold?

Could anyone have predicted, a decade ago, the explosive growth of the Internet? Network and computer bandwidth are forecast to continue advancing at about the same pace as in the past decade. On the other hand, with the Internet firmly established, and shop floor use expanding, a safe assumption is that the home and office successes will be applied in ways well suited to factory application. For example, the Intelligent Maintenance Systems consortium—a joint venture of the University of Wisconsin–Milwaukee and the University of Michigan that is funded by the National Science Foundation (NSF)—is developing practical, Internet-based systems that can predict equipment failure before it happens and use the Internet to order repair parts and schedule maintenance. In the future, we can expect much more. . . .

Nevertheless, security concerns will always be an issue because new systems are never perfect. The people who seek vulnerabilities will always find them. The people who then seek to patch them will do so also. Constant vigilance is the key to risk management.

About the author: Tony Haynes is director of manufacturing services at the National Center for Manufacturing Sciences.
