AI Demands Meaningful Metrics

Machine learning can identify patterns and trends faster than any human, but correlations are only as meaningful as the data driving the analysis.

Mark Lilly, president and CEO of software developer LillyWorks, says his company is onto something. “What we have here could be as big as the invention of MRP (material requirements planning) or the creation of finite scheduling,” he says.

Managing workflow is just one function of LillyWorks’s cloud-based enterprise resource planning (ERP) software. However, leadership sees enough promise in the roughly two-year-old Production Flow Manufacturing (PFM) scheduling system to offer it as a standalone module that integrates with any other ERP software. As the user base expands, the company has been investigating how machine learning might expand that promise by making the system’s predictive analytics capability more precise, more automated and broader in scope.

Predictive analysis is nothing new, and LillyWorks is not the only manufacturing software developer investigating machine learning. However, Mr. Lilly insists that PFM provides a particularly strong foundation for machine learning because PFM works differently than traditional scheduling systems. Specifically, he argues that the impact of machine learning depends on the quality of the dataset it analyzes, and that PFM data is more useful for ensuring any trends or patterns revealed by this technology are truly meaningful.

This is largely because the PFM dataset itself is more meaningful, at least in the sense of being relevant to the real-world problems of manufacturing schedulers. Most manufacturers create a strict schedule by loading capacity based on due date. However, due date alone is insufficient for setting shopfloor priorities. “Invariably, a shop will have jobs due, say, two months from now that are more likely to be late than jobs due just two weeks from now if they aren’t started immediately,” says Scott Filiault, vice president of operations/strategy at LillyWorks.

To avoid such problems, PFM focuses on answering one key question before all others: Which tasks are most important now to keep work flowing smoothly? To that end, the system prioritizes operations according to a system of “threat levels” assigned to each job. Threat levels indicate the level of risk that jobs will be late, and they change with time to ensure the most urgent work always gets the most attention.
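The article does not describe how PFM actually calculates threat levels, so the following Python sketch is only an illustration of the general idea: ranking jobs by risk of lateness rather than by due date. The `Job` fields, the `threat_ratio` formula (remaining work divided by time until due) and the sample numbers are all assumptions made for this example, not LillyWorks' method.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Job:
    name: str
    days_until_due: float       # calendar time left before the due date
    remaining_work_days: float  # work still ahead of the job (assumed to be known)


def threat_ratio(job: Job) -> float:
    """Hypothetical risk score: the share of remaining time the job still needs.

    Values near or above 1.0 mean little or no slack is left.
    This is a stand-in for illustration, not PFM's actual calculation.
    """
    if job.days_until_due <= 0:
        return float("inf")  # already due or overdue
    return job.remaining_work_days / job.days_until_due


def rank_by_threat(jobs: list[Job]) -> list[Job]:
    """Return jobs with the most threatened job first."""
    return sorted(jobs, key=threat_ratio, reverse=True)


# Made-up jobs mirroring the example above: one due in two weeks, one in two months.
jobs = [
    Job("A", days_until_due=14, remaining_work_days=5),
    Job("B", days_until_due=60, remaining_work_days=55),
]
ranked = rank_by_threat(jobs)
print([job.name for job in ranked])
```

Sorting by due date alone would put job A first. Under this assumed score, job B ranks higher because it has far less slack, which is the situation Mr. Filiault describes. Because the score depends on the time remaining, it would need to be recalculated as the days pass, in keeping with threat levels that change with time.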

This system is explained in more detail in this article, which details PFM’s application at a CNC machining business in Ohio. Suffice it to say, planning comes later, once a crew learns to follow PFM’s often unintuitive priority system. “This approach flips the traditional shopfloor production model on its head,” Mr. Lilly says. “PFM focuses first on priority execution, then on planning, rather than trying to create a plan and then execute it.”

The tool used for later planning is aptly named “The Predictor,” a software tool that uses PFM’s threat-level data to predict future workflows. Within the Predictor’s projections are the kinds of answers manufacturers need to plan. For example, which workstation will be the most significant bottleneck in three weeks? How late will other jobs be if I accept work from a new customer? “Answering questions like these helps determine how to improve throughput, whether that means expediting materials, adding overtime, changing orders of operations or anything else,” Mr. Filiault says.

The Predictor’s projections are believable because they are based on the reality of how jobs will be executed according to what their threat levels will be in the future, down to the level of the individual operation. As a result, including this data along with traditional scheduling data (inventory levels, processing times, orders of operations and other ERP metrics) provides deeper, more precise insight into one’s process. This is the case whether analysis is performed with the aid of a traditional software tool like the Predictor or driven by machine learning algorithms.

One difference between the two is that machine learning promises to automate analysis that hard coding can only augment. Although suggesting specific courses of action based on the results of similar situations in the past is a step beyond the current capabilities of The Predictor, machine learning could change that.

Another difference is that the scope of a machine-learning analysis can be much wider than anything possible with hard-coded software algorithms. Able to accommodate unfamiliar data without software updates, machine-learning-driven systems can seek correlations and patterns in data from sources outside PFM and ERP. Such sources could range from machine tool sensor feeds to probe measurements and financial or inventory records. In an extreme case, a system might comb online news feeds, link a material shortage to political unrest in another country, and recommend purchasing (or even autonomously purchase) materials from an alternative source.

Chances are that most software developers’ immediate goals are far less ambitious. Still, Mr. Lilly and Mr. Filiault insist that PFM provides an advantage in ensuring the dataset presented for analysis is relevant and useful to solving the real-world problems at hand. This, in turn, will help ensure correlations and patterns identified by machine learning are not just precise, but meaningful.

“Machine learning might predict that jobs are more likely to finish on time if the operator is wearing black shoes,” Mr. Lilly explains. “Maybe that machine learning model even has a high confidence level in its predictions. But are you prepared to rely on a predictive model that’s basing correlations on information that you don’t believe to be relevant, or isn’t transparent enough to understand?”
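Mr. Lilly's black-shoes example can be reproduced with a few lines of Python. The sketch below computes a plain Pearson correlation on eight invented job records. The data, the variable names and the choice of Pearson correlation are assumptions for illustration only and do not come from LillyWorks.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented records for eight jobs; 1 = yes, 0 = no.
black_shoes = [1, 1, 1, 0, 0, 1, 0, 0]
on_time = [1, 1, 1, 0, 0, 1, 0, 1]

r = pearson(black_shoes, on_time)
print(f"correlation between shoe color and on-time delivery: {r:.2f}")
```

On this tiny invented dataset the correlation comes out at roughly 0.77, which looks strong even though shoe color has no plausible bearing on delivery. The number alone cannot tell a scheduler whether the input is relevant. That judgment still depends on what data is fed to the analysis in the first place.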

For a look at how one machining business benefits from the software’s existing predictive analytics capability, read this article.

Related Content

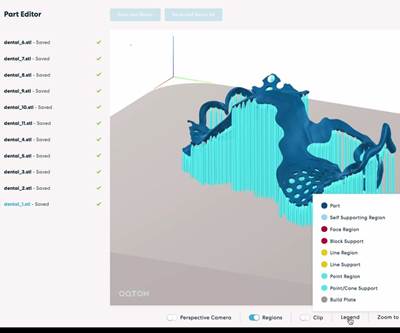

Beyond the Machines: How Quality Control Software Is Automating Measurement & Inspection

A high-precision shop producing medical and aerospace parts was about to lose its quality management system. When it found a replacement, it also found a partner that helped the shop bring a new level of automation to its inspection process.

Read MoreWireless Couplers Work Wonders for Workholding

Possibilities range from individual control of chuck jaws and tombstone fixtures to more reliable robots.

Read MoreDigital Twins Give CNC Machining a Head Start

Model-based manufacturing and the digital thread enable Sikorsky to reduce lead times by machining helicopter components before designs are finalized.

Read MoreMachine Monitoring Boosts Aerospace Manufacturer's Utilization

Once it had a bird’s eye view of various data points across its shops, this aerospace manufacturer raised its utilization by 27% in nine months.

Read MoreRead Next

AI Makes Shop Networks Count

AI assistance in drawing insights from data could help CNC machine shops and additive manufacturing operations move beyond machine monitoring.

Read MoreManufacturing Scheduling System Keeps Shopfloor Priorities Straight

Focusing on the now rather than adhering to a plan improves throughput, allows for variation and lays a strong foundation for predictive analysis.

Read More10 Takeaways on How Artificial Intelligence (AI) Will Influence CNC Machining

The Consortium for Self-Aware Machining and Metrology held a promising first meeting at the University of North Carolina Charlotte. Here are my impressions.

Read More

.png;maxWidth=300;quality=90)