Sleepless in the Gage Business

Sometimes you have to review all parts of the measuring process to find the source of a problem.

It’s one of those stories that sends shivers down the spine, causes sleepless nights and maybe even adds a few pounds for us old-timers in the dimensional gaging business. We ship a gage that we know looks great. It was designed for use on the shop floor and passed all the calibration and repeat testing specified for the application. Then, a little while after it arrives at the user’s facility, the call comes.

The gage has been rejected. It’s not working well, doesn’t seem to repeat on the parts, and the operators have lost confidence in the system. After some support calls, complaints about the perils of shipping and numerous emails back and forth, with the manufacturer growing more anxious because it needs to inspect parts, the decision is made. We will bring it back. We tell the customer to send it with the masters and the parts being measured, and we will make it right. On the face of it, it’s a black eye.

At times like this I pull out a little scrap of paper that I keep in my wallet. (Seems I pull it out more often than I want.) It’s pretty wrinkled now, and the corners are shredded. Written on it is one word: SWIPE. That’s our acronym for defining the measuring process: standard, workpiece, instrument, people and environment.

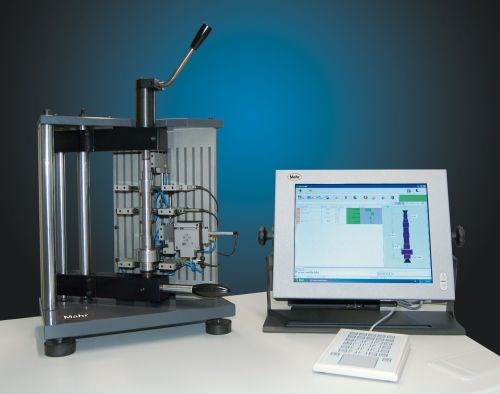

The two things that the gage manufacturer can control are the first ones we check: the standard and the instrument. The master is clean, with no rust or nicks, and the certification is original. Then we set up the gage and inspect it. It looks like it survived the trip OK. Things are properly aligned, and everything is tight. It’s clean, and there is no damage to the contacts or reference anvils.

So on goes the master, and the gage is zeroed. A quick repeat study, measuring the master 30 times, looks good. The repeat on the master is less than 4 percent of tolerance, which indicates we should easily pass a gage repeatability and reproducibility (GR&R) study. I’m starting to feel a little better.
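
The column doesn’t say exactly how the repeat figure is calculated. One common convention, assumed here, takes a multiple of the sample standard deviation of the repeated master readings (6σ in this sketch; 5.15σ is also used) and divides it by the total tolerance. The function name and the readings below are hypothetical, a minimal sketch rather than the author’s procedure.

```python
import statistics


def repeat_percent_of_tolerance(readings, tolerance, spread_factor=6.0):
    """Express master repeatability as a percentage of the part tolerance.

    readings: repeated gage readings on the same master (same units as tolerance)
    tolerance: total tolerance band of the feature (upper minus lower limit)
    spread_factor: multiplier on the sample standard deviation; 6.0 is an
        assumed convention (5.15 is also common)
    """
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    if tolerance <= 0:
        raise ValueError("tolerance must be positive")
    sigma = statistics.stdev(readings)  # sample standard deviation
    return 100.0 * spread_factor * sigma / tolerance


if __name__ == "__main__":
    # Hypothetical data: 30 readings (mm, deviation from master size) for a
    # feature with an assumed 0.050 mm total tolerance.
    readings = [0.0000, 0.0002, -0.0001, 0.0001, 0.0000, -0.0002] * 5
    pct = repeat_percent_of_tolerance(readings, tolerance=0.050)
    print(f"repeat = {pct:.1f}% of tolerance")
```

A result comfortably under the 4 percent mentioned above is the kind of number that suggests the instrument itself is not the problem.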

Two of the five parts of the SWIPE process are working well.

In our experience, the environment is one of the largest sources of error in the measuring process, with dirt and oil the major contributors. However, this customer’s work area is reported to be clean, and the parts were dry and clean when received, so dirt is probably not the problem. Temperature is another big environmental factor, but it usually shows up as short- or long-term drift, because the gage takes time to respond to any environmental change, whether sudden or gradual. Drift is not the customer’s complaint. Another possibility is parts coming warm off a machine, with the gage actually detecting the size change as each part cools. But again, the customer is measuring many parts a long way from the point of manufacture, so they have had time to settle. Environment can apparently be crossed off the list.
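
To put the warm-part scenario in numbers, linear thermal expansion gives ΔL = α · L · ΔT. The sketch below assumes a typical coefficient for steel of about 11.5 × 10^-6 per °C, a hypothetical 50 mm diameter, a part 10 °C warm, and an assumed 0.025 mm tolerance; the function name is illustrative only.

```python
def thermal_size_change(size_mm, delta_t_c, alpha_per_c=11.5e-6):
    """Linear size change (mm) of a feature that is delta_t_c degrees C
    warmer (positive) or cooler (negative) than its final measuring temperature.

    Uses delta_L = alpha * L * delta_T. The default alpha is an assumed,
    typical value for steel; check the actual material.
    """
    return alpha_per_c * size_mm * delta_t_c


if __name__ == "__main__":
    # Hypothetical case: 50 mm steel diameter measured 10 degrees C above
    # room temperature, against an assumed 0.025 mm total tolerance.
    growth_mm = thermal_size_change(50.0, 10.0)
    tolerance_mm = 0.025
    print(f"size change = {growth_mm * 1000:.2f} um "
          f"({100 * growth_mm / tolerance_mm:.0f}% of tolerance)")
```

Under these assumptions the part shrinks about 5.75 µm as it cools, roughly 23 percent of the assumed tolerance: enough to show up as a steady trend in the readings, which is why parts that have already cooled rule this out.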

Next are people, the ones actually operating the gage. With comparative gages like the one in question, a lot can be done to ensure that parts go into the gage the same way every time. Guides, backstops and even spring loading can be used to make measuring hands-off, eliminating operator-induced variation as much as possible. All of these standard design features were built into this gage, and it repeated well with multiple operators loading the master. The operator does not appear to have much influence on the measurement.

Then there are the workpieces. A quick look at these and the lightbulbs start to come on. The parts are in need of some good finishing. The surfaces appear roughly machined, not nearly what one would expect for this type of part. So, before the first part goes onto the gage for testing, the whole lot of parts goes to the inspection room to get measured for diameters, roundness, squareness and surface finish—all the dimensions to be checked by the gage.

The results aren’t pretty. The parts show significant roundness variation (over a short span), significant out-of-square conditions, and large surface-finish and profile errors. Looking at the results, it is clear that the gage is capable of detecting these variations. Is the gage just reporting what it’s “seeing”? Even without placing a part in the gage, it’s obvious that there is going to be significant variation within each part. The gage is doing its job.
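
One way to make “the gage is doing its job” quantitative: if the gage’s repeat error and the part’s own form variation are independent, their variances add, so the part’s contribution can be backed out of the observed spread. That independence is an assumption of this sketch; the function names and numbers are hypothetical.

```python
import math


def part_form_sigma(sigma_observed, sigma_gage):
    """Estimate the standard deviation contributed by the part itself.

    Assumes gage repeat error and part form variation are independent,
    so their variances add: observed**2 = gage**2 + part**2.
    """
    if sigma_observed < 0 or sigma_gage < 0:
        raise ValueError("standard deviations must be non-negative")
    # Clamp at zero: if the gage alone explains the spread, nothing is left for the part.
    return math.sqrt(max(0.0, sigma_observed**2 - sigma_gage**2))


def part_share_of_variance(sigma_observed, sigma_gage):
    """Fraction of the observed variance attributed to the part."""
    if sigma_observed == 0:
        return 0.0
    return part_form_sigma(sigma_observed, sigma_gage) ** 2 / sigma_observed**2


if __name__ == "__main__":
    # Hypothetical numbers (mm): gage sigma from a master repeat study,
    # observed sigma from repeatedly loading one real part.
    gage = 0.00013
    observed = 0.0012
    print(f"part sigma ~ {part_form_sigma(observed, gage):.5f} mm, "
          f"part share of variance ~ {part_share_of_variance(observed, gage):.0%}")
```

When the master repeats tightly but the parts scatter widely, nearly all of the observed variance lands on the part side of the ledger.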

And that’s exactly what appears to be the issue. The gage is measuring and reporting the variation in the part. There is one way to try to eliminate part variation while testing the gage: place an orientation mark on each part. With the mark, the gage always measures at the same point on the part and should report the same relative value every time. With some parts, however, even this technique has its limits. If the out-of-roundness is large enough, or the profile poor enough, even small positioning errors will reveal the variation and make the gage appear to perform badly. But it’s still the parts that are causing the issue.
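
A small simulation illustrates both the orientation mark and its limits. It assumes a two-point diameter gage and a part whose radius varies as a simple cosine with an even number of lobes; the lobe counts, form amplitude, placement error and gage noise are all made-up values, and the function names are hypothetical.

```python
import math
import random
import statistics


def two_point_reading(theta, nominal, form_amplitude, lobes):
    """Two-point diameter reading at angle theta (radians) on a part whose
    radius deviates by form_amplitude * cos(lobes * theta).

    For an even lobe count the opposite contact sees the same deviation, so
    the diameter varies by 2 * form_amplitude * cos(lobes * theta). With an
    odd count the deviations cancel and a two-point gage reads a constant
    diameter, so this sketch rejects odd counts.
    """
    if lobes % 2:
        raise ValueError("this simple model assumes an even number of lobes")
    return nominal + 2.0 * form_amplitude * math.cos(lobes * theta)


def simulate_readings(trials, orientation, nominal=25.0, form_amplitude=0.002,
                      lobes=12, mark_error_rad=0.02, gage_sigma=0.0002, seed=1):
    """Return simulated gage readings (mm) for repeated loads of one part.

    orientation="random": the part goes into the gage at any angle.
    orientation="marked": the part is aligned to an orientation mark, with a
    placement error of up to +/- mark_error_rad. Defaults are illustrative.
    """
    if orientation not in ("random", "marked"):
        raise ValueError("orientation must be 'random' or 'marked'")
    rng = random.Random(seed)
    readings = []
    for _ in range(trials):
        if orientation == "random":
            theta = rng.uniform(0.0, 2.0 * math.pi)
        else:
            theta = rng.uniform(-mark_error_rad, mark_error_rad)
        reading = two_point_reading(theta, nominal, form_amplitude, lobes)
        # Add the gage's own repeat error as Gaussian noise.
        readings.append(reading + rng.gauss(0.0, gage_sigma))
    return readings


if __name__ == "__main__":
    for lobes in (12, 60):
        for orientation in ("random", "marked"):
            r = simulate_readings(30, orientation, lobes=lobes)
            print(f"lobes={lobes:2d} {orientation:>6}: "
                  f"std dev = {statistics.stdev(r) * 1000:.2f} um, "
                  f"range = {(max(r) - min(r)) * 1000:.2f} um")
```

With the assumed 12-lobe form, marked loads should scatter far less than random loads. Raising the lobe count to 60 with the same placement error lets the mark’s small misalignment sweep across much more of the form, so the marked spread grows even though the gage itself has not changed.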

In this case, we trade a few more phone calls, exchange some measurements and some tips about part alignment, and the gage owner is reluctantly convinced. The company was not thrilled to learn the condition of its parts, but that’s another story. I’m sure there is some machine tool builder or insert company getting a phone call—and losing some sleep over these same parts. But here, the weight has been lifted.
