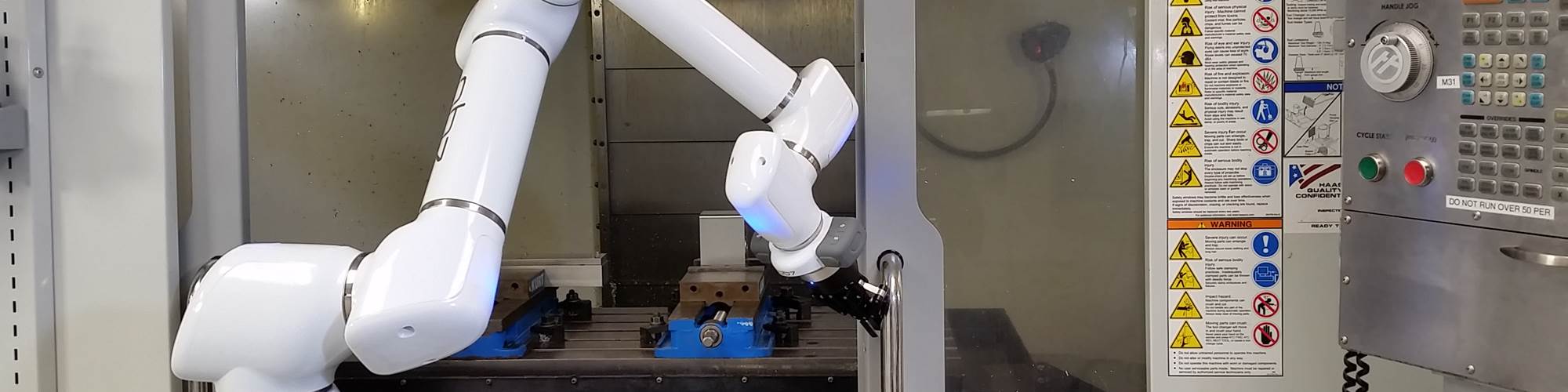

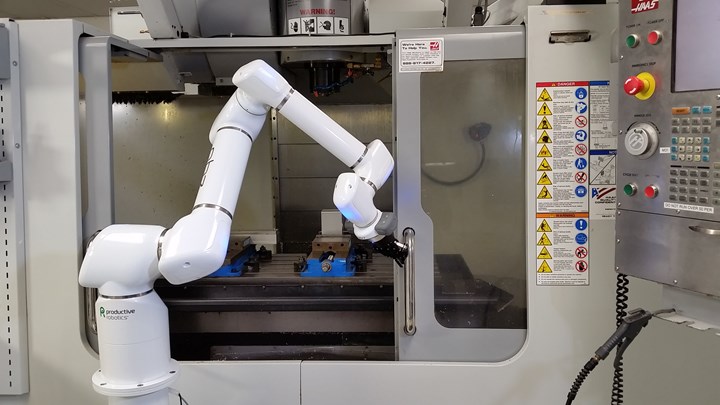

Productive Robotics’ OB7 “learns” its path from simple teaching routines. Other features, such as a seventh axis that enables the arm to reach around doors and other objects, add flexibility in applications such as CNC machine tending.

Robots don’t always move like robots. For example, Productive Robotics’ collaborative OB7 glides smoothly through its routine, exhibiting none of the snappy awkwardness that once characterized virtually all robotic automation. Watch closely, and you’ll also notice that the arm moves slightly differently from the way the human manipulated it during the teaching process. “We show the robot where to go, but it figures out its own way to get there,” says Zac Bogart, CEO.

Although such technology could justifiably be called “smart,” the OB7 need be only so smart as to eliminate the need for traditional robot programming. “We use ‘smart’ algorithms to plan and govern the motions, but we don't apply learning or neural networks or anything like that,” Mr. Bogart says.

For CNC machine shops, such distinctions are becoming more than academic. Words like “smart,” or even the more specific “artificial intelligence” (AI), are broad, catch-all terms that could refer to any number of approaches to replicating human thinking. As this technology reaches shop floors, questions about the extent to which a technology is intelligent, what kind of intelligence it employs, and how that intelligence might be applied are becoming more important.

Robotics is just one area in which this is the case, but examples that recently appeared in MMS make some of these questions easier to answer. They also illuminate both the promise and the limitations of a seemingly limitless technology. One was the subject of my last column: an intelligent robot control system called MIRAI. Developed by Micropsi Industries, MIRAI uses machine learning, cameras and force sensors to provide robots with what is essentially hand-eye coordination.

More specifically, MIRAI uses imitation learning. That is, the user manipulates the arm through the task to be automated, approaching the target from as many angles and positions as possible. Meanwhile, a multi-camera vision system watches, and the neural network learns. The result is not a “path,” like the one followed by the OB7. Rather, the system’s algorithms focus entirely on what it looks like to achieve the task, such as “insert plug into socket.” If the socket moves, the robot will follow, guided by real-time force and sensor feedback as well as by what it has learned about the requirements to achieve the desired end state.
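
To make the distinction between a taught path and a learned end state concrete, here is a minimal sketch in Python. It is not MIRAI's method and involves no neural network; it is a plain one-dimensional feedback loop, and every name in it (`replay_fixed_path`, `follow_target`, `observe_target`, `socket`) is hypothetical. It shows only why a controller that keeps re-observing its target can follow a socket that moves, while a replayed path cannot.

```python
def replay_fixed_path(path):
    """Taught-path behavior: visit the recorded waypoints regardless of the scene."""
    return list(path)


def follow_target(start, observe_target, step=0.5, tolerance=0.01, max_steps=100):
    """Closed-loop behavior: re-observe the target every cycle and move toward it."""
    position = start
    trace = [position]
    for t in range(max_steps):
        target = observe_target(t)      # stand-in for camera and force feedback
        error = target - position
        if abs(error) <= tolerance:     # close enough: the end state is reached
            break
        position += step * error        # move a fraction of the remaining distance
        trace.append(position)
    return trace


def socket(t):
    """Hypothetical socket position that shifts from 1.0 to 2.0 at cycle 5."""
    return 1.0 if t < 5 else 2.0


fixed = replay_fixed_path([0.0, 0.5, 1.0])   # ends where the socket used to be
adaptive = follow_target(0.0, socket)        # ends where the socket is now
```

In this toy case the replayed path stops at 1.0, the socket's original position, while the feedback loop settles within the tolerance of 2.0.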

However, machine learning has limits in robotics, particularly in manufacturing. This brings us to a second example of an AI-driven robotic system: Freemove from Veo Robotics. Veo’s goal is ambitious: to make all robots collaborative by eliminating the need to compromise speed and power for the sake of safety. Like MIRAI, the system employs artificial senses to perceive its environment and artificial intelligence to react to it. However, Veo’s AI offers only one potential reaction to its environment: to slow (to a stop if necessary) in order to avoid harmful contact with anything that might be a person. Its sole aim is to answer the question, “When, where and how fast is it safe to move?”

To this end, the system uses object classification and tracking algorithms, as well as speed and separation monitoring technology. Essentially, that means it labels the objects around it (that is, it differentiates the workpiece, fixture and so forth from hazards that could be people) and predicts all possible future positions of potential hazards. The system performs these complex calculations fast enough to intervene whenever sensors reveal a high enough chance of a prediction becoming reality.
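
The general idea behind speed and separation monitoring can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Veo's implementation: the class `TrackedObject`, the functions, and all four constants are hypothetical values chosen for the example. The sketch assumes anything labeled as a possible person may approach at a worst-case speed, then finds the fastest robot speed at which the robot could still stop before contact.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TrackedObject:
    label: str         # e.g. "workpiece", "fixture", "possible_person"
    distance_m: float  # current distance from the robot, in meters


# Hypothetical parameters, chosen only for illustration.
MAX_HUMAN_SPEED = 1.6  # m/s, assumed worst-case approach speed of a person
REACTION_TIME = 0.1    # s, assumed sensing and processing delay
MAX_DECEL = 2.0        # m/s^2, assumed robot braking deceleration
MARGIN = 0.2           # m, assumed allowance for measurement uncertainty


def required_separation(robot_speed: float) -> float:
    """Distance needed for the robot to stop before a person could reach it."""
    stop_time = robot_speed / MAX_DECEL
    human_travel = MAX_HUMAN_SPEED * (REACTION_TIME + stop_time)
    robot_travel = robot_speed * REACTION_TIME + robot_speed**2 / (2 * MAX_DECEL)
    return human_travel + robot_travel + MARGIN


def safe_speed(objects: list[TrackedObject], full_speed: float) -> float:
    """Highest speed, up to full_speed, that preserves the required separation."""
    hazards = [o.distance_m for o in objects if o.label == "possible_person"]
    if not hazards:
        return full_speed
    nearest = min(hazards)
    if required_separation(full_speed) <= nearest:
        return full_speed
    if required_separation(0.0) > nearest:
        return 0.0  # too close even at a standstill: stop
    low, high = 0.0, full_speed
    for _ in range(40):  # bisection; required_separation grows with speed
        mid = (low + high) / 2
        if required_separation(mid) <= nearest:
            low = mid
        else:
            high = mid
    return low
```

Note that nothing here is learned. The same inputs always produce the same speed limit, which is the kind of predictability the safety argument depends on.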

This is an approximation of human intelligence, but it is not machine learning. A robot with Freemove does not make its own path, improve with time or otherwise “learn” anything new. However, for this application, such capability is not just unnecessary, it is also ill-suited. “Even very good machine learning could have one fail in 10,000,” said Patrick Sobalvarro, co-founder of Veo, in the June issue of MMS. “That’s not good enough when human safety is involved.”

The appropriate level of “smarts” will be a more critical question as technology evolves. Consider that MIRAI lends its finesse for only a few moments before returning control to the program. The prospect of similar decision-making on a higher level brings up a key philosophical concern.

I didn’t know at the time that I’d be quoting him in this context, but Mr. Bogart articulated this concern well during an email exchange about the OB7. “Once the robot has proven to perform a particular task (including movements), it is important that it do it the same way every time,” he wrote. “If it ‘learns’ what it thinks is ‘better,’ it's not acceptable in a manufacturing environment to change that without a proper human review. Continual variability is the enemy of a stable manufacturing process.”

AI is a tool, and it is not one-size-fits-all. As long as this is the case, the intelligence that matters most will be the human smarts behind technology research and investment.
