A.I. Will Become Our Inventor

We know how to engineer automation, but can we automate engineering? This possibility is nearer than we might expect, and it will have implications for manufacturing.


Neural networks are not scary.

Or so says Conrad Tucker, associate professor of engineering at Penn State University. Neural networks—artificial intelligence (A.I.) systems using advanced computing to aid in problem-solving—are tools that will eventually become accepted and widely employed by engineers. He is part of a team of researchers that recently won a $900,000 grant from the U.S. Department of Defense for a project that will train a computer system to invent. If this work realizes its aim, it will impact manufacturing because of the role manufacturing plays in invention.

Granted, if you need to claim something isn’t scary, then it must be kind of scary. But Dr. Tucker points out that we already interact with neural networks routinely. When a search engine returns the web page we are looking for even after we mistyped the term, or when suggested purchase items on Amazon are eerily accurate to our tastes, this is the work of a neural network. Why can’t engineers use this same kind of network to obtain engineering designs that are eerily close to the optimal solution to a given problem?

The name of the project describes what it will do. GANDER aims to use “Generative Adversarial Networks for Design Exploration and Refinement.” The “adversarial” networks here are a generator and a discriminator. As the “generator” network proposes engineering ideas, the “discriminator” network shoots them down based on their fitness for physical reality. The generator then employs machine learning to grow from this feedback. Eventually, the generator will become so good at passing the discriminator’s reality tests that it will propose engineering designs that represent very effective solutions.
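To make the propose-and-reject loop concrete, here is a toy sketch in Python. It is not the GANDER system and not a real generative adversarial network: the "discriminator" is a fixed, made-up stress rule rather than a trained network, and the "generator" learns with a simple keep-the-best sampling scheme instead of gradient-based training. The names `critic` and `refine`, the load, and the stress limit are all hypothetical, chosen only for illustration.

```python
import random
import statistics

LOAD = 1000.0          # hypothetical applied load (arbitrary units)
STRESS_LIMIT = 250.0   # hypothetical allowable stress (arbitrary units)


def critic(thickness):
    """Stand-in for the discriminator: score one proposed beam thickness.

    Designs that fail the toy physics check score -inf (shot down).
    Among designs that pass, thinner (lighter) ones score higher.
    """
    if thickness <= 0:
        return float("-inf")
    stress = LOAD / thickness ** 2  # toy physics: stress falls as thickness grows
    if stress > STRESS_LIMIT:
        return float("-inf")
    return -thickness


def refine(rounds=40, batch=50, keep=10, seed=0):
    """Stand-in for the generator: propose designs, learn from the critic."""
    rng = random.Random(seed)
    mean, spread = 5.0, 2.0  # assumed starting guess and search width
    for _ in range(rounds):
        proposals = [rng.gauss(mean, spread) for _ in range(batch)]
        accepted = [t for t in proposals if critic(t) > float("-inf")]
        if not accepted:
            spread *= 1.5  # nothing passed the check, so search more widely
            continue
        # Keep the best-scoring accepted designs and re-center on them.
        best = sorted(accepted, key=critic, reverse=True)[:keep]
        mean = statistics.fmean(best)
        # A floor on the spread keeps the search from stalling too early.
        spread = max(statistics.pstdev(best), 0.05)
    return mean


if __name__ == "__main__":
    print(f"Refined thickness: {refine():.3f}")
```

In this toy problem the thinnest beam that passes the stress check has a thickness of 2.0, so the refined value should settle just above that. The real project differs in the ways that matter most: its discriminator has to learn what physical reality allows from many simulated CAD models, and both networks are trained rather than hand-written.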

All the steps in realizing this vision are huge, because machine learning works through a mind-bogglingly large volume of data and calculations. Thus, the hope of automatic design is still a way off. Step one is to train the discriminator, and the grant funds this work. Many, many CAD models will be fed to a system that computationally subjects these part forms to a range of simulated physical environments to gage how the forms respond. Charting a comprehensive map of physical reality in this way might seem daunting, but Dr. Tucker says we have seen this kind of mapping before. Indeed, he lives with an active neural network that isn’t scary at all, but endearing.

“I have an 18-month-old son,” he says. “He is learning all about the world by exploring and testing it, and in part, he relies upon me to give him feedback. This is the same model at work: one agent generating ideas and the other agent constraining them.” Our computers can now follow the same model because they can now carry out the computations required at a useful speed.

The most immediate practical implications are likely to involve additive manufacturing, Dr. Tucker says. 3D printing can help speed the networks’ learning by generating proposed forms for testing physically. And as part of mapping reality, the discriminator can map the constraints of the different 3D printing systems so that proposed designs are optimized for these systems.

But the broader change for manufacturing will come in the way we invent. Today, we find our way to engineering solutions in much the same way as Dr. Tucker’s son, through physical exploration. We call these explorations “prototypes,” and a considerable share of the work of manufacturing is prototyping in some fashion. But in the future—maybe the far future, or maybe in 10 years—much of this work will be done computationally, with A.I. helping us to more quickly understand which ideas are ready for production.

Related Content

Shop Reclaims 10,000 Square Feet with Inventory Management System

Intech Athens’ inventory management system, which includes vertical lift modules from Kardex Remstar and tool management software from ZOLLER, has saved the company time, space and money.

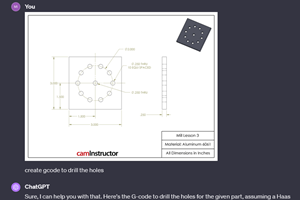

Read MoreCan ChatGPT Create Usable G-Code Programs?

Since its debut in late 2022, ChatGPT has been used in many situations, from writing stories to writing code, including G-code. But is it useful to shops? We asked a CAM expert for his thoughts.

Read MoreOrthopedic Event Discusses Manufacturing Strategies

At the seminar, representatives from multiple companies discussed strategies for making orthopedic devices accurately and efficiently.

Read MoreCutting Part Programming Times Through AI

CAM Assist cuts repetition from part programming — early users say it cuts tribal knowledge and could be a useful tool for training new programmers.

Read MoreRead Next

January Issue of Additive Manufacturing Pairs AM with Machine Learning

The January issue of Additive Manufacturing magazine looks at three ways machine learning is advancing understanding of 3D printing processes and materials.

Read MoreOEM Tour Video: Lean Manufacturing for Measurement and Metrology

How can a facility that requires manual work for some long-standing parts be made more efficient? Join us as we look inside The L. S. Starrett Company’s headquarters in Athol, Massachusetts, and see how this long-established OEM is updating its processes.

Read More
